Artificial intelligence is rapidly transforming the educational landscape, offering everything from personalized learning to automated grading. However, this technological boom is no longer an unregulated “Wild West.” The European Union has introduced the AI Act, the world’s first comprehensive legal framework for artificial intelligence, which works alongside existing data privacy laws like the GDPR. Because of the “Brussels Effect,” these rules not only apply to European institutions but also impact universities globally—including those in the United States—if they partner with EU schools, run exchange programs, or use AI systems that affect EU residents.

To help make sense of these complex regulations, here is a breakdown in basic terms of how the new laws categorize AI, what universities are allowed (and not allowed) to do, and how they can prepare.

The AI Rulebook for Higher Education: A “Risk-Based” Approach

The EU AI Act does not treat all artificial intelligence equally. Instead, it uses a risk-based pyramid: the higher the potential for an AI system to harm a student’s health, safety, or fundamental rights, the stricter the rules become.

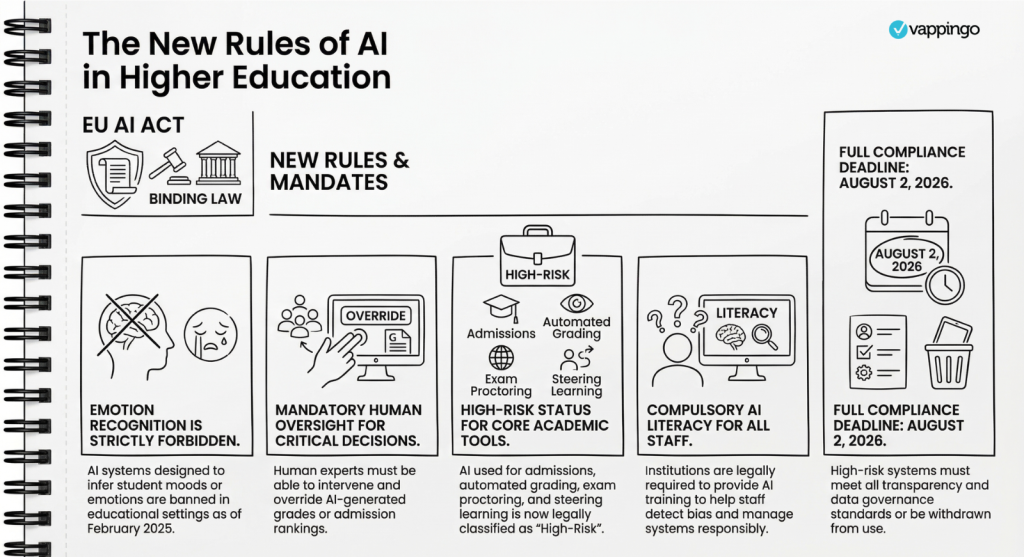

What Universities CANNOT Do (Unacceptable Risk)

The law strictly bans certain AI practices that are deemed an unacceptable threat to human dignity and rights. For universities, the most important prohibitions include:

- Emotion Recognition: Universities cannot use AI to monitor students’ or staff members’ facial expressions, voices, or other biometric data to infer their emotions, stress levels, or engagement during classes or exams. The Act allows only a narrow exception for systems used for medical or safety reasons.

- Social Scoring: Institutions cannot use AI to create a point system that rewards or punishes students based on their social behavior or personality traits.

- Manipulative AI: Universities cannot use AI that deploys subliminal techniques to manipulate students’ behavior in ways that could cause them psychological or physical harm.

- Biometric Categorization: AI cannot be used to sort students into sensitive groups by inferring their race, political beliefs, or sexual orientation from biometric data.

What Universities CAN Do—But With Strict Rules (High-Risk AI)

Education is considered a highly sensitive area because decisions made here can alter the trajectory of a person’s life. Universities can use advanced AI, but the following applications are legally classified as “High-Risk” and come with heavy homework:

- Admissions and Selection: AI that reads applications, ranks candidates, or decides who gets admitted or receives financial aid.

- Grading and Assessment: AI software used to grade essays, evaluate learning outcomes, or steer a student’s personalized learning path.

- Automated Proctoring: AI that monitors students via webcam during online tests to detect cheating.

- Student Tracking: Predictive algorithms that flag students as “at-risk” of dropping out.

The Rules for High-Risk AI: Before a university can deploy these tools, it must be able to show that the system is safe and fair. That means ensuring high-quality, unbiased data, keeping detailed technical documentation, logging the system’s actions, and guaranteeing strong cybersecurity. Most importantly, public universities must perform a Fundamental Rights Impact Assessment (FRIA) to show that the AI will not discriminate against or harm students. Furthermore, these systems must always have a “human in the loop,” meaning a human must oversee the AI and be able to override its decisions.

What Universities CAN Do Easily (Limited & Minimal Risk)

Not every AI tool requires a massive legal compliance effort.

- Transparency (Limited Risk): If a university uses a chatbot to answer student FAQs or uses AI to generate synthetic images/deepfakes for a project, they simply must follow transparency rules. The main requirement is clearly telling the user, “You are interacting with an AI, not a human.”

- Unregulated (Minimal Risk): AI used for basic administrative tasks, like scheduling classes, allocating rooms, or filtering spam, is generally free from these strict regulations.
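
For readers who maintain a register of campus software, the four tiers described above can be written down as a simple lookup table. The Python sketch below is only an illustration: the use-case labels, the `RiskTier` names, and the `inventory_report` helper are hypothetical and simply mirror the examples in this article. It is not an official taxonomy and not legal advice, and anything it does not recognize is routed to manual review rather than guessed.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited risk (transparency duties)"
    MINIMAL = "minimal risk"


# Hypothetical labels that mirror the examples in this article.
# Real classification needs legal review of each individual system.
USE_CASE_TIERS = {
    "emotion_recognition": RiskTier.UNACCEPTABLE,
    "social_scoring": RiskTier.UNACCEPTABLE,
    "manipulative_ai": RiskTier.UNACCEPTABLE,
    "biometric_categorization": RiskTier.UNACCEPTABLE,
    "admissions_ranking": RiskTier.HIGH,
    "automated_grading": RiskTier.HIGH,
    "exam_proctoring": RiskTier.HIGH,
    "dropout_prediction": RiskTier.HIGH,
    "faq_chatbot": RiskTier.LIMITED,
    "synthetic_media": RiskTier.LIMITED,
    "class_scheduling": RiskTier.MINIMAL,
    "room_allocation": RiskTier.MINIMAL,
    "spam_filtering": RiskTier.MINIMAL,
}


def classify(use_case: str) -> Optional[RiskTier]:
    """Return the tier for a known label, or None if it needs manual review."""
    return USE_CASE_TIERS.get(use_case)


def inventory_report(systems: dict) -> dict:
    """Group system names by tier; unknown use cases go under 'needs review'."""
    report = {}
    for name, use_case in systems.items():
        tier = classify(use_case)
        key = tier.value if tier is not None else "needs review"
        report.setdefault(key, []).append(name)
    return report


if __name__ == "__main__":
    # Made-up system names, for illustration only.
    campus_systems = {
        "ProctorTool": "exam_proctoring",
        "HelpDeskBot": "faq_chatbot",
        "HomegrownScript": "unknown_purpose",
    }
    for tier, names in inventory_report(campus_systems).items():
        print(f"{tier}: {', '.join(names)}")
```

The one deliberate design choice is the "needs review" bucket: a tool that does not match a known category is flagged for a human to assess instead of being silently treated as minimal risk.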

The Research Exemption

The law explicitly protects academic freedom. AI systems developed solely for the purpose of scientific research and testing are exempt from the AI Act’s heavy rules, provided they are not released to the general market or used on real students as a finished product.

A Solid Set of Recommendations for Universities

To avoid massive fines (which, for the most serious violations such as using a prohibited AI practice, can reach up to €35 million or 7% of global annual turnover) and protect both students and institutional reputation, universities should take the following proactive steps:

- Conduct a Campus-Wide “AI Inventory”

Many professors and administrative staff are currently using AI off the books (often called “shadow AI”) to grade papers or optimize schedules. Universities must map out exactly what AI systems are currently being used, classify them by risk level, and identify if they are dealing with external vendors who aren’t compliant.

- Mandate AI Literacy Training

The law specifically requires that staff and users possess