We are currently witnessing an “algorithmic arms race” on university campuses. As generative AI tools become ubiquitous, institutions have responded with “AI surveillance”—the deployment of automated detection software to police student work. However, there is a fundamental tension: while schools move quickly to adopt these tools, the technology itself is statistically volatile and often scientifically unproven.

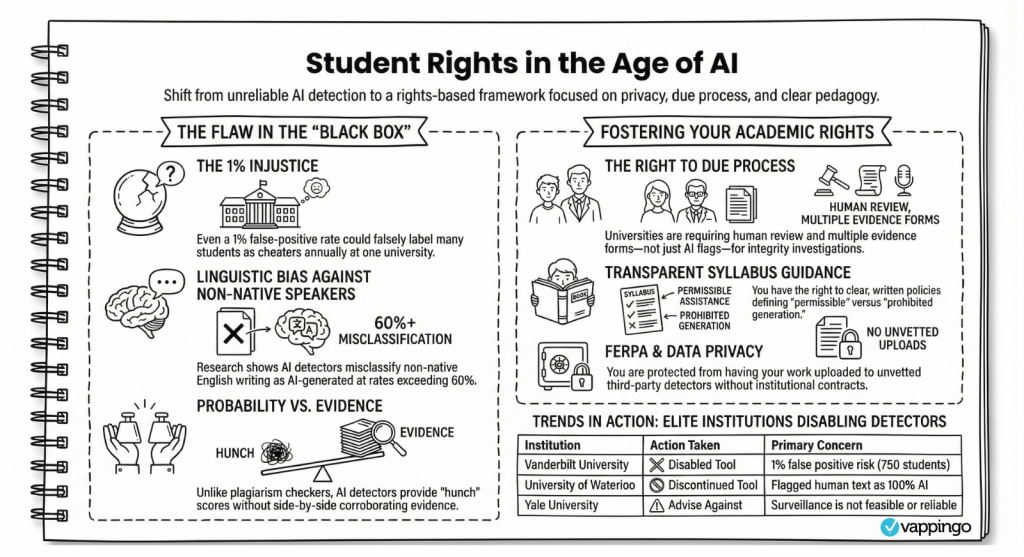

For you, the student, the stakes are high. A “hunch” from a machine can lead to suspended graduations, failing grades, or permanent marks on your academic record. In this landscape, you cannot be passive. Even Turnitin has acknowledged that its initial “1% false positive” claim was inaccurate, yet many institutions continue to use these tools without transparency. You must understand your rights and proactively document your original work to protect your academic future.

AI and Students’ Rights

Right to Human Review

You have the right to insist that no automated detection score serves as the sole evidence for a sanction. Some universities have stopped using AI detection entirely because the tools are not reliable enough to serve as proof.

Right to Due Process

If accused, you have the right to a fair hearing, proper notice, and a meaningful opportunity to defend your process. This includes the right to ask the hearing board to define their “Standard of Proof” and challenge evidence that is purely probabilistic.

Right to Institutional Transparency

You have the right to know which tools are being used. Many detectors are “black boxes” that provide no corroborating evidence for their “flags,” unlike traditional plagiarism checkers that show side-by-side matches.

Right to Equitable Assessment

It is well documented that some AI detection systems penalize non-native speakers. You have the right to be judged by standards that do not unfairly penalize you for linguistic styles associated with being a non-native speaker or being neurodivergent. Understanding these rights is your primary defense as you navigate the patchwork of rules governing the modern classroom.

Student Rights Under FERPA

- Protection from Unauthorized Data Sharing: Students have the right to have their educational records protected from being submitted to unvetted, external third-party AI detection databases. Instructors generally cannot use these third-party tools to evaluate student work unless a formal university contract or purchase order is explicitly in place.

- Protection of Personally Identifiable Information: Even if an instructor attempts to anonymize a student’s work, submitting it to an unauthorized AI detector can still violate the student’s FERPA rights if any personally identifiable information remains present in the text.

- Protection from Third-Party Exploitation: FERPA protections, alongside intellectual property rights, help ensure that a student’s personal data is not submitted to external companies where it could be exposed to data breaches or utilized by vendors to train their own future AI models.

Institutional AI Policies

Institutional stances on AI are currently fragmented. While some schools have issued universal mandates, most defer to individual faculty members. This creates a high-risk environment for students moving between different departmental “rules of engagement.”

Comparison of AI policy frameworks:

| Policy Type | Typical Institutional Examples | The “Ground Truth” for Students |
|---|---|---|
| Banned | Brown, Georgetown, Duke | Any use of AI to generate content is fraud. Work must be entirely your own; AI is only for spelling/grammar. |
| Limited Use | Vanderbilt, Georgia Tech, Emory | AI is treated as a collaborator or “writing coach.” Use is permitted for brainstorming, but the student must remain the primary author. |
| No Explicit Policy | Harvard, MIT, Stanford | These schools rely on existing Honor Codes. Crucially, their guidance (like MIT Sloan) explicitly states “AI Detectors Don’t Work,” focusing on authentic voice instead. |

The “Black Box” Problem

AI detectors do not “read” your work; they perform a statistical analysis of linguistic patterns. They are “hunches” masquerading as forensic evidence.

Technical Failure Points

Detectors rely on two primary metrics that frequently misidentify human writing:

- Perplexity: This measures how predictable a sequence of words is to a language model. Because AI is essentially a “super-charged predictive text generator,” it produces text with low perplexity, so detectors treat low perplexity as a sign of machine authorship.

- Burstiness: This measures variation in sentence structure. Human writing typically has high “burstiness” (mixing long, complex sentences with short ones), whereas AI tends to be more uniform.
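Commercial detectors do not publish their exact formulas. Real perplexity is also measured with a large language model rather than simple word counts. The sketch below is therefore only a toy illustration of the two ideas, built on two stated assumptions. First, a simple add-one-smoothed unigram word model stands in for a real language model. Second, burstiness is defined here as the coefficient of variation of sentence length (standard deviation divided by mean), which is one common informal definition rather than a standard. The function names (`burstiness`, `unigram_perplexity`) are hypothetical and do not belong to any detection product.

```python
import math
import re
from collections import Counter


def sentence_lengths(text: str) -> list[int]:
    """Word counts for each sentence, splitting crudely on ., ! and ?."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Toy burstiness: coefficient of variation of sentence length.

    0.0 means every sentence has the same length; higher values mean the
    writer mixes long and short sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    mean = sum(lengths) / len(lengths)
    variance = sum((n - mean) ** 2 for n in lengths) / len(lengths)
    return math.sqrt(variance) / mean


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(text: str, reference: str) -> float:
    """Toy perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    Lower values mean the words are more predictable to this model.
    """
    ref_tokens = tokenize(reference)
    counts = Counter(ref_tokens)
    vocab_size = len(counts) + 1  # one extra slot shared by all unseen words
    total = len(ref_tokens)
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no words")
    log_prob = sum(math.log((counts[t] + 1) / (total + vocab_size)) for t in tokens)
    return math.exp(-log_prob / len(tokens))


if __name__ == "__main__":
    uniform = "The cat sat down. The dog sat down. The bird sat down."
    varied = "Stop. The old dog, tired after a long walk through the rain, finally sat down. Why?"
    print(burstiness(uniform), burstiness(varied))

    reference = "the cat sat on the mat the dog sat on the mat"
    print(unigram_perplexity("the cat sat on the mat", reference))
    print(unigram_perplexity("quantum giraffes negotiate tariffs", reference))
```

In this toy, the evenly paced text scores 0.0 burstiness and the varied text scores higher. The familiar sentence also scores lower perplexity than the unfamiliar one. This is the mechanism by which consistent, conventional human prose can look “AI-like” to metrics of this kind.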

Excellence or Fraud?

In a disturbing trend seen in the Doe v. Yale case, “standard academic excellence” is now being used as evidence of fraud. Yale instructors flagged an exam for having “near perfect punctuation and grammar” as a sign of AI use. You must be aware that clear, formal, and well-structured writing, the very thing you are taught to produce, can now trigger a detector’s “low perplexity” flag. See Examples of People Being Falsely Accused of AI Usage for more information.

Vulnerable Populations

Research from Stanford and Michigan confirms that these tools create a “linguistic penalty” for specific groups:

- Non-Native English Speakers: Because non-native writers often use clearer, more constrained, and less “bursty” syntax, they are misclassified as AI-generated at rates as high as 61% in some studies.

- Neurodivergent Students: Students with OCD or anxiety can produce highly structured, formal, and consistent prose. These traits mirror the low-perplexity patterns of AI, leading to false accusations against students whose “robotic” style is actually a manifestation of their disability.

While some companies claim 99% accuracy, third-party evaluations have reported false-positive rates ranging from 10% to 35%. Even at a 1% false-positive rate, the impact is devastating. Vanderbilt University noted that, of the 75,000 papers it submitted, a 1% rate would mean roughly 750 papers wrongly labeled as AI-generated. This is why many elite institutions have disabled these tools entirely. See A PhD Student’s Guide to Surviving False AI Detection.
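The arithmetic behind these figures is simple multiplication. The sketch below makes two assumptions. First, every submitted paper is human-written, so every flag is a false positive. Second, the false-positive rate applies uniformly and independently to each paper. `expected_false_flags` is a hypothetical helper written for illustration, not part of any detector's software.

```python
def expected_false_flags(human_papers: int, false_positive_rate: float) -> float:
    """Expected number of human-written papers wrongly flagged as AI-generated.

    Assumes every paper is human-written and each one is flagged
    independently with the same false-positive rate.
    """
    if not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be between 0 and 1")
    return human_papers * false_positive_rate


if __name__ == "__main__":
    papers = 75_000  # the Vanderbilt submission count cited above
    # 1% is the Vanderbilt example; 10% and 35% are the ends of the
    # third-party range cited above.
    for rate in (0.01, 0.10, 0.35):
        flagged = expected_false_flags(papers, rate)
        print(f"{rate:.0%} false-positive rate: about {flagged:,.0f} papers wrongly flagged")
```

At 1% this gives 750 papers. At the 10% to 35% rates reported by third parties, the same 75,000 papers would yield between 7,500 and 26,250 wrongful flags.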

Proactive Integrity: Documenting Your Learning Journey

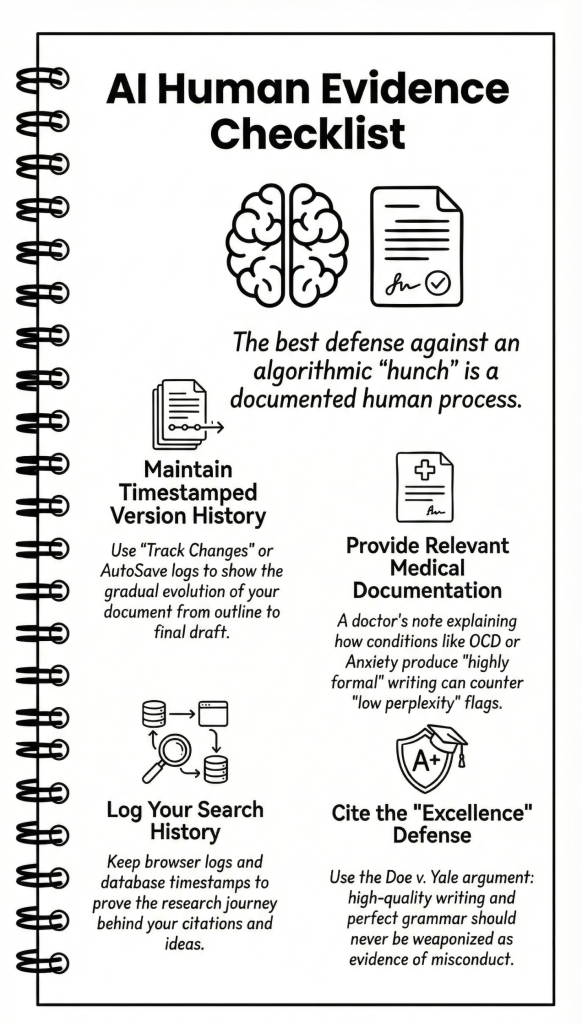

The best defense is a robust “paper trail.” You must prove the process of learning, not just the final product.

- Technical Trail: Always work in cloud-based platforms like Office 365 or Google Docs. These services maintain a timestamped “Version History” of your edits. If you are accused, this shows that your essay didn’t appear as a single “copy-paste” block but evolved over hours of labor.

- Scaffolding Evidence: Maintain a “learning audit” folder. Save your initial brainstorming notes, outlines, annotated bibliographies, and every rough draft. This demonstrates the intellectual “scaffolding” that AI cannot replicate.

- The Process Statement: Adopt a “discourse of transparency.” Submit a brief statement with your work explaining how you completed it. Crucial Advocacy Tip: Before submitting, secure an agreement from your professor that a disclosure of AI for brainstorming will not result in a penalty. Normalizing this transparency rewards honesty and protects you from “surprise” flags. See How To Acknowledge AI Usage in Your Essays

Citing AI Collaboration

| Citation Style | Basic Formatting Rule |
|---|---|
| APA | Name the tool’s developer (e.g., OpenAI) as the author and the tool (e.g., ChatGPT) as the title, with the version and year. Describe the prompt you used in your text. |
| MLA | Do not list the AI tool as an author. Describe the prompt in the “Title of Source” position and name the AI tool (e.g., ChatGPT) as the “Container.” Then give the version, the developer as publisher, the date the content was generated, and the URL. |

Transparency is your shield. If you use AI as a collaborator, you must acknowledge it according to current standards.

Fighting False AI Allegations

If you face an allegation, you must shift from a defensive posture to an advocacy posture.

- Define the Standard of Proof: Ask the hearing board, “What is your required standard of proof?” Remind them that the University of Pittsburgh and others maintain that “AI detectors are not reliable enough to act as proof.”

- Demand Human Scrutiny: Do not accept a percentage score. Request a “Human Scrutiny” review where a subject matter expert compares the flagged work against your previous human-verified writing samples.

See What You Should Say if You Are Accused of AI Usage

Hearing Evidence Checklist

- Version History: Print or share the timestamped evolution of your document.

- Medical Documentation: If applicable, provide a doctor’s note explaining how conditions like OCD or anxiety influence your “highly formal” or “structured” natural writing style. This counters the “low perplexity” argument.

- Search History: Provide your browser history and library database search logs to show the research journey behind your citations.

- The “Excellence” Defense: If flagged for “perfect grammar,” cite the Doe v. Yale case and argue that high-quality writing should not be weaponized as evidence of misconduct.

Maintaining Your Voice

At its core, generative AI is a probability engine. It can mimic the structure of a story, but it cannot replicate the human vulnerability, personal detail, or nuanced reflection that characterizes true learning. Your greatest asset is your unique perspective. AI cannot write about your specific life experiences with the depth that you can. Embrace the process, document the journey, and never let a machine, or a flawed detection tool, replace your unique voice. By being proactive and legally informed, you can navigate the AI frontier with integrity and confidence.

Sarah Moore is the founder of Vappingo, a global editing and proofreading company supporting students and academics across disciplines. Over the past decade, through her work reviewing academic manuscripts, she has developed a focused expertise in AI governance in higher education, academic integrity frameworks, and human-in-the-loop educational systems.

Her recent research examines AI detection bias, regulatory compliance under the EU AI Act, algorithmic accountability, and the evolving legal risks facing universities deploying automated decision-making systems. She writes on the intersection of generative AI, blockchain credentialing, student data privacy, and educational policy reform.