- The difference between RRL and RRS in terms of focus, sources and goals

- How AI tools can give you a massive efficiency boost when compiling your RRL and RRS

- The difference between citation-based AI tools and semantic-based AI tools

- The top RRS and RRL tools for 2026

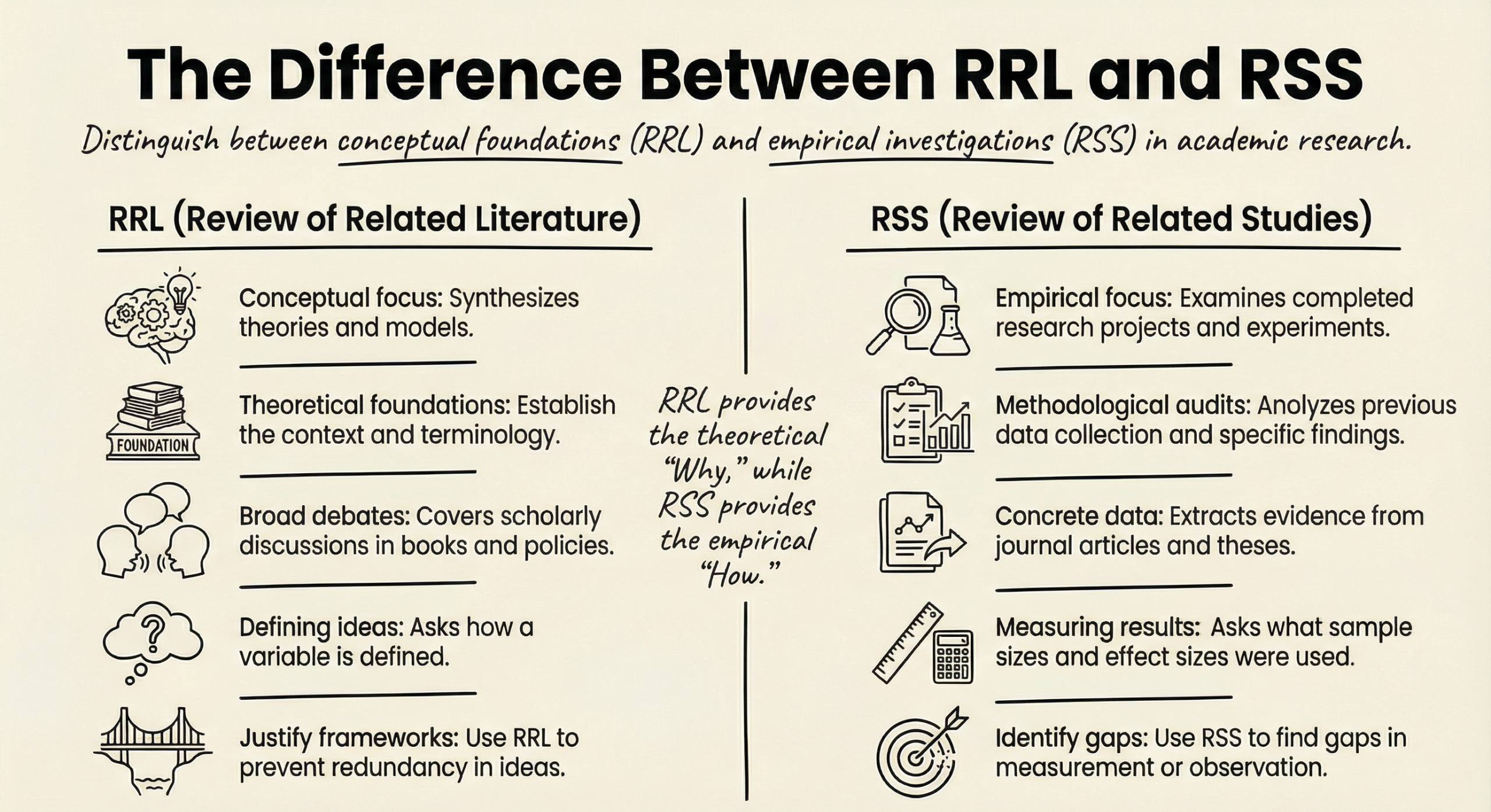

The Difference Between RRL and RRS

Before deploying AI, it’s important that you clearly understand the distinction between RRL and RRS.

RRL tells you the theory; RRS tells you the results.

The Roles of RRL and RRS

| Feature | Review of Related Literature (RRL) | Review of Related Studies (RRS) |
|---|---|---|
| Primary Focus | Theories, concepts, models, and scholarly debates. | Empirical work, experiments, and data-driven investigations. |
| Typical Sources | Books, theoretical essays, policy documents, and conceptual papers. | Journal articles reporting data, graduate theses, conference papers, and unpublished research reports. |
| Goal | To answer: “What do scholars say about this topic conceptually?” | To answer: “What have researchers actually measured, tested, or observed?” |
| Key Output | Establishes definitions, variables, and the theoretical lens. | Identifies methodological trends (sampling, instruments, analytic techniques) and gaps in data. |

Research Topic: The Effects of Sleep Deprivation on Academic Achievement Among University Students

Research Question: How does sleep deprivation affect academic performance in undergraduate students?

Now we clearly separate RRL and RRS.

Example of RRL

In your RRL, you explore concepts and theoretical foundations, not specific studies.

You would cover:

1) What Is Sleep Deprivation?

- Definitions (acute vs chronic sleep deprivation)

- Recommended sleep duration for young adults

2) Theoretical Links Between Sleep and Learning

- Cognitive Load Theory

- Memory consolidation theory

- Attention and executive function models

- Circadian rhythm theory

3) Conceptual Relationship Between Sleep and Academic Achievement

- How sleep supports memory encoding

- The role of REM sleep in learning

- Sleep and emotional regulation

- Sleep and decision-making

Example RRL Paragraph

Sleep plays a fundamental role in cognitive functioning. Memory consolidation theory suggests that information learned during the day is stabilized and integrated into long-term memory during sleep, particularly during REM cycles. Cognitive Load Theory further proposes that when students are sleep-deprived, their working memory capacity is reduced, limiting their ability to process complex academic material. From a theoretical perspective, inadequate sleep may therefore impair attention, executive function, and long-term retention—all of which are critical for academic success.

Notice what this paragraph does:

✔ Defines concepts

✔ Explains mechanisms

✔ Uses theory

✔ Does NOT cite specific experiments or percentages

That’s RRL.

Example of RRS

In your RRS, you review specific empirical studies, not concepts.

You would cover:

- Experimental sleep restriction studies

- GPA correlation studies

- Surveys of student sleep habits

- Longitudinal studies tracking sleep and performance

- Lab-based cognitive testing after sleep deprivation

Example RRS Paragraph

A longitudinal study of 1,125 undergraduate students found that students who averaged fewer than six hours of sleep per night had significantly lower GPAs compared to those who averaged seven to eight hours. Similarly, an experimental study in which participants were restricted to four hours of sleep demonstrated measurable declines in attention span, reaction time, and short-term memory performance. Survey-based research has also shown that students reporting chronic sleep deprivation are more likely to miss deadlines and perform poorly on exams.

Notice what this paragraph includes:

✔ Sample sizes

✔ Measured outcomes

✔ Study design

✔ Results

That’s RRS.

The Clear Difference Between RRL and RRS

| If You Write… | It Belongs In… |
|---|---|
| “Memory consolidation occurs during REM sleep.” | RRL |
| “A 2022 study found students sleeping <6 hours had lower GPAs.” | RRS |
| “Cognitive load theory suggests reduced working memory capacity.” | RRL |
| “Participants restricted to 4 hours showed 30% slower reaction times.” | RRS |
| “Sleep influences executive function.” | RRL |
| “Researchers used regression analysis to measure GPA impact.” | RRS |

RRL explains why sleep should affect academic performance.

RRS shows whether it actually does.

One builds your lens.

The other tests reality.

How RRL and RRS Work Together

After completing your RRL, you might conclude:

Theory strongly suggests sleep deprivation should impair academic achievement.

But after completing your RRS, you might discover:

- Most studies are short-term lab experiments.

- Few studies examine long-term cumulative GPA.

- Most research focuses on medical students, not general undergraduates.

- Very little research explores online learners.

That becomes your knowledge gap.

Example gap statement:

While existing literature establishes a theoretical link between sleep and cognitive performance, there is limited longitudinal research examining the cumulative impact of chronic sleep deprivation on GPA among non-traditional undergraduate students.

That gap emerges only when RRL and RRS are clearly separated.
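One way to make this gap-spotting step concrete is to log each study from your RRS in a small table and count how often each design and population appears. The Python sketch below is only an illustration: the `Study` fields, the `tally` helper, and the three placeholder rows are hypothetical and do not describe real studies.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Study:
    label: str       # your own short identifier for the paper
    design: str      # e.g. "lab experiment", "longitudinal", "survey"
    population: str  # e.g. "medical students", "online learners"


def tally(studies: list[Study], field: str) -> Counter:
    """Count how many logged studies share each value of the given field."""
    return Counter(getattr(s, field) for s in studies)


# Placeholder rows for illustration only, not real studies
logged = [
    Study("study-1", "lab experiment", "medical students"),
    Study("study-2", "lab experiment", "medical students"),
    Study("study-3", "longitudinal", "general undergraduates"),
]

print(tally(logged, "design"))
print(tally(logged, "population"))
```

Categories with few or no entries (here, any population you expected to see but never logged) are candidate gaps to confirm by hand before you write the gap statement.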

Why Confusing RRL and RRS Leads to “Revise and Resubmit”

Students often:

- Mix theory and study results in the same paragraph

- Describe one study and call it “literature”

- Discuss definitions inside the studies section

- Fail to identify methodological trends

Committees expect:

RRL = intellectual framework

RRS = empirical map of what has been tested

When they’re mixed, your conceptual foundation becomes unclear.

Using AI to Boost Your RRL and RRS

- Significant Time Reduction: Researchers observed a >50% time reduction in screening tasks.

- Rapid Abstract Review: AI tools led to 5- to 6-fold decreases in abstract review time, with individual abstracts reviewed in as little as 7 seconds.

- The Workload Multiplier (WSS@95%): Using the technical standard of Work Saved over Sampling at 95% recall (WSS@95%), AI automation has demonstrated a 6- to 10-fold decrease in total manual workload.
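To see what the WSS metric in the last bullet measures, here is a minimal Python sketch. It assumes the commonly used definition WSS@R = (TN + FN) / N − (1 − R), where TN and FN are the records the tool excluded correctly and incorrectly, N is the total number of records, and R is the target recall. The function name and the counts in the example are illustrative assumptions, not figures from any study.

```python
def wss(tn: int, fn: int, total: int, recall: float = 0.95) -> float:
    """Work Saved over Sampling at a given recall level.

    tn, fn: records the tool excluded correctly / incorrectly
    total:  all records in the screening set
    """
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0.0 < recall <= 1.0:
        raise ValueError("recall must be in (0, 1]")
    # Fraction of records never read manually, minus the share that
    # random sampling would also let you skip at this recall level
    return (tn + fn) / total - (1.0 - recall)


# Hypothetical screening run: 1,000 records, 700 excluded by the tool
example = wss(tn=695, fn=5, total=1000)
print(f"WSS@95% = {example:.2f}")
```

With these made-up counts the result is 0.65, meaning about 65% of the screening effort is saved relative to reading records in random order while still reaching 95% recall.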

Citation vs. Semantic Search

Citation-Based Tools (Mapping the Network)

- Pros: Highly reliable; they rely on human-mapped knowledge—the connections researchers intentionally created.

- Cons: They can result in “citation islands”—disconnected sub-fields that never reference each other, causing you to miss entire bodies of relevant work.
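The idea of a citation island can be sketched as a graph problem: papers are nodes, citations are links, and an island is a group of papers connected to each other but to nothing outside the group. The Python below is a rough illustration under that assumption. The function name and the paper labels are hypothetical, and it does not describe how any particular tool works internally.

```python
from collections import defaultdict


def citation_islands(citations: list[tuple[str, str]]) -> list[set[str]]:
    """Group papers into clusters connected by at least one citation link."""
    graph = defaultdict(set)
    for citing, cited in citations:
        graph[citing].add(cited)
        graph[cited].add(citing)  # treat links as undirected for clustering

    seen: set[str] = set()
    islands = []
    for start in graph:
        if start in seen:
            continue
        # Depth-first walk collecting every paper reachable from `start`
        island, stack = set(), [start]
        while stack:
            paper = stack.pop()
            if paper in seen:
                continue
            seen.add(paper)
            island.add(paper)
            stack.extend(graph[paper] - seen)
        islands.append(island)
    return islands


# Hypothetical paper labels: A cites B, B cites C, X cites Y
links = [("A", "B"), ("B", "C"), ("X", "Y")]
print(citation_islands(links))
```

Here the papers fall into two groups, A-B-C and X-Y. A search that starts from paper A and only follows citations will never reach X or Y, however relevant they are.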

Semantic-Based Tools (Analyzing the Text)

- Pros: Excellent for early discovery and, crucially, for bridging citation islands by finding conceptually related work that doesn’t share a reference chain.

- Cons: “Similar” results might occasionally lack the depth required for highly technical sub-fields.

The Top RRL and RRS Tools for 2026

- Documind: Best for bulk interrogation. It uses GPT-4 to interact with your PDF library, allowing you to ask natural language questions across hundreds of papers simultaneously to find specific data points.

- Elicit: The specialist for RRS. It automates the extraction of methodologies, sample sizes, and key findings, providing structured summaries ready for synthesis.

- ResearchRabbit: The king of “path-tracing.” It allows you to “hop back” through your search history, ensuring you never get lost when exploring complex reference chains.

- Litmaps: Superior for Co-Authorship Search and visual customization. It identifies papers based on who wrote them and allows you to customize the axes (references, connectivity, date) to prioritize impactful seminal works.

- Consensus: The best engine for direct, evidence-based answers. It extracts findings directly from peer-reviewed papers to resolve conflicting evidence.

Ethics and Verification

- Never trust, always verify: Manually check every AI-generated citation. AI tools are prone to inventing BibTeX citations for papers that do not exist.

- Search Engine, not Ghostwriter: Use AI to find and triage papers, but never to write the synthesis.

- The Cochrane Disclosure: Per formal research standards, you must include an “AI Use Disclosure” section in your report, specifying which tools were used and the prompts applied.

- Prohibition of Authorship: Under no circumstances can an AI be listed as a co-author. The human remains the only one capable of actual reasoning and accountability.

- RAISE Principles: Adhere to the Responsible AI use in Systematic Evidence Synthesis (RAISE) standards: ensure your use is ethical, transparent, and fit-for-purpose.
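For the “never trust, always verify” rule above, a small script can help you decide which AI-generated citations to look up first. The Python sketch below flags entries with a missing or malformed DOI. The `DOI_SHAPE` pattern is an assumption about the general shape of modern DOIs, and the citation entries are made-up placeholders. A well-formed DOI can still be invented, so this only orders your queue; it does not replace checking each reference by hand.

```python
import re

# Rough shape of a modern DOI; an assumption, not proof that the DOI exists
DOI_SHAPE = re.compile(r"^10\.\d{4,9}/\S+$")


def needs_manual_check(citation: dict) -> bool:
    """Return True when a citation has no DOI or the DOI looks malformed."""
    doi = (citation.get("doi") or "").strip()
    return not DOI_SHAPE.match(doi)


# Hypothetical entries for illustration; titles and DOI are placeholders
citations = [
    {"title": "Placeholder paper one", "doi": "10.1234/example.5678"},
    {"title": "Placeholder paper two", "doi": ""},
    {"title": "Placeholder paper three"},
]

for c in citations:
    if needs_manual_check(c):
        print("Check first:", c["title"])
```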