It’s the heart-stopping moment of the digital age: you open your graded essay expecting feedback on your ideas, and instead you see a “cheated” label or a “Highly Likely AI-generated” score. You know you wrote it. You remember the late nights, the messy drafts, the abandoned outlines, and the struggle to find the right words, especially if English isn’t your first language. But the software gives you a cold, yes-or-no verdict: “robot.”

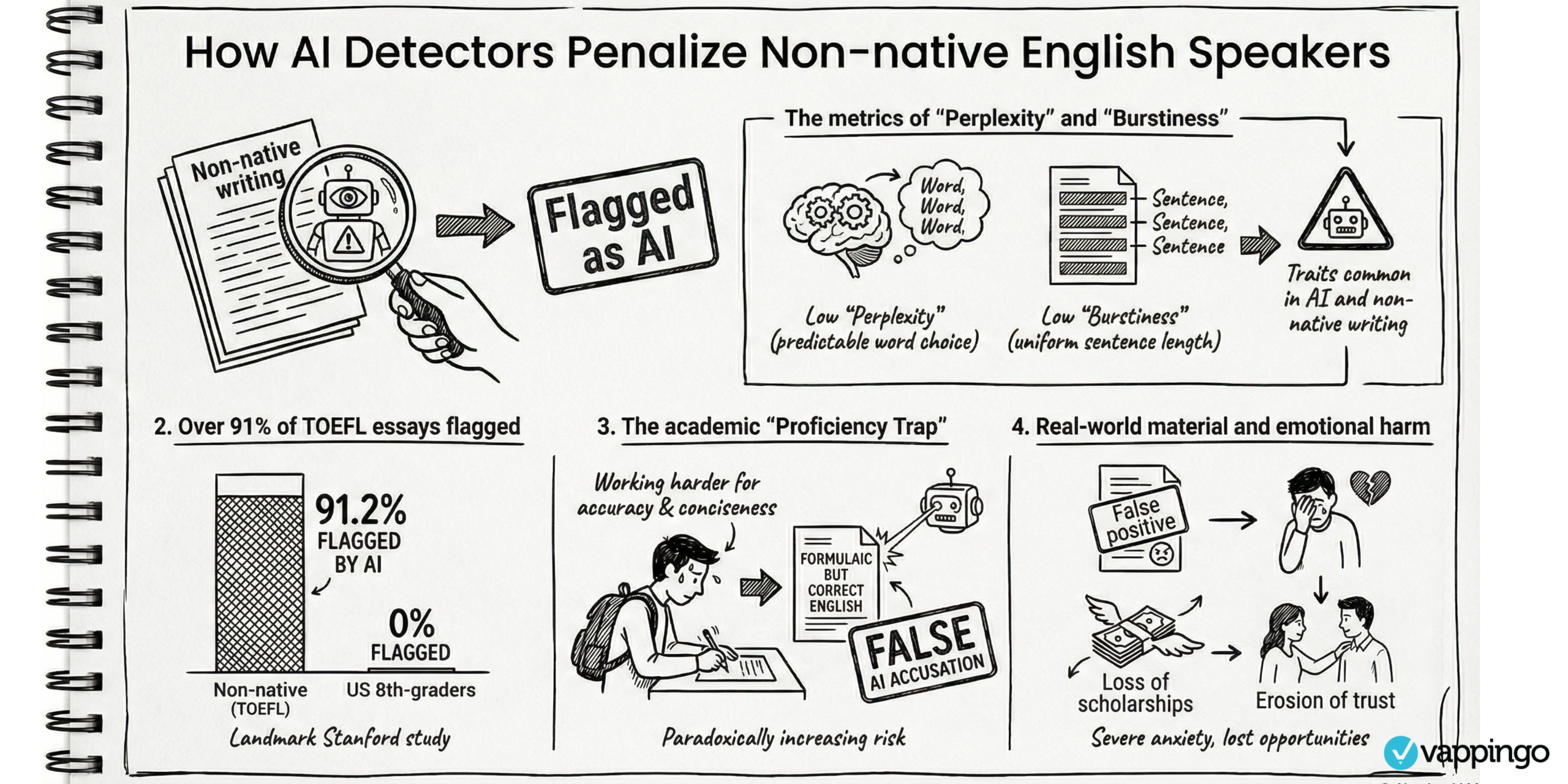

As tools like ChatGPT become normal in classrooms, many educators have rushed to adopt AI “detectors” to protect academic integrity. The problem is that these tools are not neutral truth machines. They don’t “know” who wrote your essay. They look for patterns and make a prediction. And in the rush to stop cheating, some schools have created an algorithmic border that can unfairly target non-native English speakers. This is the proficiency trap: the detector mistakes clear, structured, simpler English for AI writing.

The Stanford Study: A Flaw in the Foundation

A major Stanford University study (Liang et al., 2023) exposed a serious bias in AI detection technology. Researchers tested seven widely used detectors on essays written by native and non-native English speakers, and the difference was huge.

Here are the key results:

- U.S. eighth-grade essays: detectors were “near-perfect” at recognising human writing.

- TOEFL essays (non-native English): on average, detectors wrongly labelled 61.3% as AI-written.

- Unanimous errors: all detectors agreed (wrongly) that 19.8% of human TOEFL essays were AI.

- The absolute risk: at least one detector flagged 97.8% of human TOEFL essays as “likely AI.”

If a tool flags nearly 98% of human-written essays from one group, that’s not a “small error.” It means the tool is failing in a predictable way. For you as a non-native English speaker, the detector doesn’t work like a precision instrument; it can behave like a demographic filter that treats your writing style as suspicious.

The Proficiency Trap: Why Your “Good” Writing Gets You Flagged

To understand why this happens, you need to know the two main signals detectors look for: perplexity and burstiness.

- Perplexity (the “surprise” factor): This measures how predictable your word choices are. If a tool can easily guess your next word, your perplexity is “low.” AI writing often has low perplexity because it tends to choose very common, safe phrasing.

- Burstiness (the “rhythm” factor): This measures how much your writing varies. Human writing often has bursts—short sentences mixed with longer ones. AI can sound more even and steady.
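To make these two signals concrete, here is a simplified Python sketch. It assumes you already have the probability a language model gave each actual next word (the numbers in the example are made up), and it treats burstiness as variation in sentence length. Some tools instead measure how much perplexity varies from sentence to sentence. Commercial detectors don’t publish their exact formulas, so this illustrates the idea rather than how any specific product works.

```python
import math
import re
import statistics


def perplexity(token_probs: list[float]) -> float:
    """Perplexity = exp(-average log-probability of each actual next token).

    `token_probs` are the probabilities a language model assigned to the words
    that actually appeared (hypothetical inputs; a real detector would compute
    them with its own model). High probabilities give LOW perplexity.
    """
    avg_log_prob = sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(-avg_log_prob)


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence lengths, in words.

    0.0 means every sentence has the same length (very even rhythm);
    larger values mean more mixing of short and long sentences.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


if __name__ == "__main__":
    # Safe, template-like word choices: the model guesses each word easily.
    print("predictable:", perplexity([0.9, 0.8, 0.9, 0.85]))
    # Unusual word choices: the model is often surprised.
    print("surprising: ", perplexity([0.2, 0.05, 0.3, 0.1]))

    print("even rhythm:  ", burstiness("I wrote this. I wrote this. I wrote this."))
    print("varied rhythm:", burstiness(
        "I stayed up late. After three drafts and two abandoned outlines, "
        "the argument finally made sense to me. Done."
    ))
```

The “predictable” sequence scores a lower perplexity, which is exactly what a detector reads as machine-like, even though a careful, template-following human writer produces the same pattern.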

Now here’s the unfair part: when you learn academic English (especially for TOEFL-style writing), you’re often taught to write in a clear, structured way. You use a safer vocabulary. You follow templates. You avoid risky phrasing because you want to be correct. That style can look “predictable” to a detector, even when the writing is completely yours.

So the more closely you follow the rules of “good academic English,” the more likely the algorithm is to label your writing as AI. Your “good student habits” become the very thing that triggers suspicion.

The 26% Problem: Marketed Accuracy vs. Real Life

Detection companies often advertise accuracy rates of 98% to 99.98%. But two points deserve a closer look.

First, the real-world performance tells a different story.

One of the strongest examples is OpenAI itself. In 2023, OpenAI shut down its own AI classifier because it had a low rate of accuracy. It correctly identified only 26% of AI-written text and still had a 9% false positive rate. In other words, it missed most AI text and still accused some humans.
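A quick base-rate calculation shows why a 26% detection rate combined with a 9% false positive rate is so damaging. The sketch below plugs OpenAI’s published figures into a hypothetical batch of 1,000 essays. The assumption that 10% of those essays are AI-written is purely illustrative, not a measured figure.

```python
def flag_breakdown(n_essays: int, ai_share: float, tpr: float, fpr: float) -> dict[str, float]:
    """Split a detector's flags into correct catches and false accusations.

    ai_share: fraction of essays that are actually AI-written (an assumption).
    tpr: fraction of AI-written essays the detector flags (true positive rate).
    fpr: fraction of human-written essays it flags anyway (false positive rate).
    """
    ai_essays = n_essays * ai_share
    human_essays = n_essays - ai_essays
    caught = ai_essays * tpr
    falsely_accused = human_essays * fpr
    return {
        "caught_ai": caught,
        "missed_ai": ai_essays - caught,
        "falsely_accused": falsely_accused,
        # Of everything the detector flags, what fraction is an innocent student?
        "share_of_flags_wrong": falsely_accused / (caught + falsely_accused),
    }


if __name__ == "__main__":
    # OpenAI's 2023 classifier: 26% of AI text caught, 9% of human text flagged.
    # The 10% AI share is hypothetical.
    result = flag_breakdown(n_essays=1000, ai_share=0.10, tpr=0.26, fpr=0.09)
    for key, value in result.items():
        print(f"{key}: {value:.2f}")
```

Under that assumption the detector catches about 26 AI essays, misses about 74, and flags about 81 human essays, so roughly three out of every four flags land on someone who did nothing wrong. If fewer essays are actually AI-written, the share of wrongful flags rises further.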

Independent testing and real-world reports also suggest false positives can be common (often cited around 15% in informal, practical testing). And the weirdest part? Some detectors have flagged famous human-written documents as AI, including reports of the U.S. Declaration of Independence being labelled around 97% AI-generated.

This matters because a detector score should never be treated like proof. It’s not a verdict. It’s a weak clue — and sometimes it’s wildly wrong.

Second, even an accuracy rate of 98% to 99.98% is still massively problematic…

“What False Accusation Rate Is Acceptable?”

Tech ethicist Christopher S. Penn asks a brutal but important question:

“What is your acceptable rate of false accusation?”

Schools often worry about false negatives (someone cheats and gets away with it). But the bigger ethical problem is false positives: when you get accused even though you did nothing wrong.

Penn’s point is simple: if the consequences are serious (losing a scholarship, failing a course, being suspended), then the acceptable false accusation rate should be close to 0%.

And even tiny error rates become huge at scale. There are about 2.235 million first-time college students in the U.S. If each student writes 10 essays a year, then:

- even a 1% false positive rate could mean 223,500 possible false accusations per year.

- if the rate is closer to 15%, that becomes roughly 3.35 million possible false accusations per year, and the system becomes a trust disaster.
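The scale argument is simple multiplication, and the same arithmetic shows why the marketed 98% to 99.98% accuracy figures are still worrying. The sketch below reuses the enrolment and essay figures from the text. As a simplifying assumption, it treats every essay as human-written and reads “1 − accuracy” as the false positive rate. Real accuracy figures mix both kinds of error, so read the outputs as rough orders of magnitude.

```python
STUDENTS = 2_235_000      # first-time U.S. college students (figure from the text)
ESSAYS_PER_STUDENT = 10   # essays per student per year (assumption from the text)


def false_accusations(false_positive_rate: float,
                      students: int = STUDENTS,
                      essays_each: int = ESSAYS_PER_STUDENT) -> int:
    """Expected number of human-written essays flagged as AI in one year."""
    return round(students * essays_each * false_positive_rate)


if __name__ == "__main__":
    # (label, false positive rate); "1 - accuracy" is a rough stand-in here.
    scenarios = [
        ("99.98% 'accuracy'", 0.0002),
        ("1% false positives", 0.01),
        ("98% 'accuracy'", 0.02),
        ("15% false positives", 0.15),
    ]
    for label, rate in scenarios:
        print(f"{label:>20}: {false_accusations(rate):,} essays")
```

Even the most optimistic marketed figure still implies thousands of wrongly flagged essays a year (4,470 at 99.98%), and the 98% figure implies 447,000.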

That’s how you get a culture where students feel guilty until proven innocent, because a software score is treated like evidence.

High-Stakes Consequences Beyond the Classroom

If you’re an international student, a “robotic” label can become more than a grade problem; it can become a legal and life problem.

In the U.S., if you are suspended or expelled, your record in the Student and Exchange Visitor Information System (SEVIS) may be terminated. That can lead to serious visa consequences, including potential loss of legal status.

And it’s not only essays. There’s also a major grey area: translation.

One real example discussed in academic reporting involved a student writing an original essay in Mandarin and using AI to translate it. The detector flagged it as 100% AI. That raises a fairness question: if the thinking and writing were yours, is using AI translation “cheating,” or is it a language tool?

Many policies still don’t make this clear, which leaves you exposed.


“Hallucinations” Show Why This Whole System Is Fragile

There’s another reason detectors don’t solve the problem: AI can generate fake information (often called “hallucinations”). That means you can’t rely on AI output, even if it sounds confident.

We’ve already seen real-world damage in law, where people submitted AI-written documents with made-up citations:

- Noland v. Land of the Free: lawyers were fined $10,000 for filing a brief with fake AI-generated citations.

- Al-Hamim v. Star Hearthstone: the court declined to sanction the self-represented litigant but issued a strong warning after “hallucinated” cases were submitted.

These cases show two things at once:

- AI can be dangerous if you trust it blindly.

- Using unreliable detectors to “police” unreliable AI adds a second layer of unreliability.

What You Can Do, and What Schools Should Do

The issues and gaps outlined above call for a shift from “policing” to “process.” The most practical solution is not perfect detection; it’s better proof and better assessment.

What you can do: a false detection survival guide

- Document your process: Write in Google Docs or Word Online so you have version history.

- Show the evolution: A doc that appears as a single pasted block looks suspicious. A doc with weeks of edits looks human.

- Keep your scaffolding: Save brainstorms, outlines, rough drafts, and notes.

- Use process tracking tools when helpful: Some tools (like GPTZero’s “Writing Report”) claim to show writing patterns over time. This can help as supporting evidence.

- If you’re accused, walk them through your work: Show drafts, sources, and how your argument developed.


What faculty should do: better practices

- Synoptic assessment: Assign work that forces you to connect ideas across the course, so shortcuts are harder.

- Observed tasks: Use supervised writing or structured practical tasks (where appropriate).

- Mini-vivas: Short oral follow-ups can confirm your understanding quickly and fairly.

- AI literacy instead of blanket bans: Teach the difference between “AI as a language aid” and “AI as a ghostwriter.” Make expectations clear.

Toward a More Ethical System

AI is forcing education to make a choice: will schools protect integrity in a way that protects students… or in a way that punishes the wrong people?

If detectors can’t tell the difference between “you used help with grammar” and “you didn’t write this,” then relying on them creates an unfair system. It builds an algorithmic border that blocks global voices, especially non-native English speakers.

The future of fair education depends on a simple idea: protect humans, not just grades. Your writing doesn’t have to be perfect to be real, and you shouldn’t have to prove you’re human just because your English is clear.