The contemporary academic and publishing ecosystem is undergoing a profound structural shift.

Generative AI tools have transformed how knowledge is synthesized, drafted, and reviewed. In response to the explosion of synthetic content, the industry has widely adopted AI detection algorithms, ushering in an era of algorithmic surveillance that has inadvertently created an ethical minefield.

In this high-stakes environment, where authors suffer from intense “AI anxiety” and fear of false accusations, the role of the professional human editor and proofreader has fundamentally changed. They are no longer simply correctors of grammar; they are the ultimate guarantors of authenticity, integrity, and equity.

The “Polished Writing” Paradox and the Perverse Incentive to De-Polish

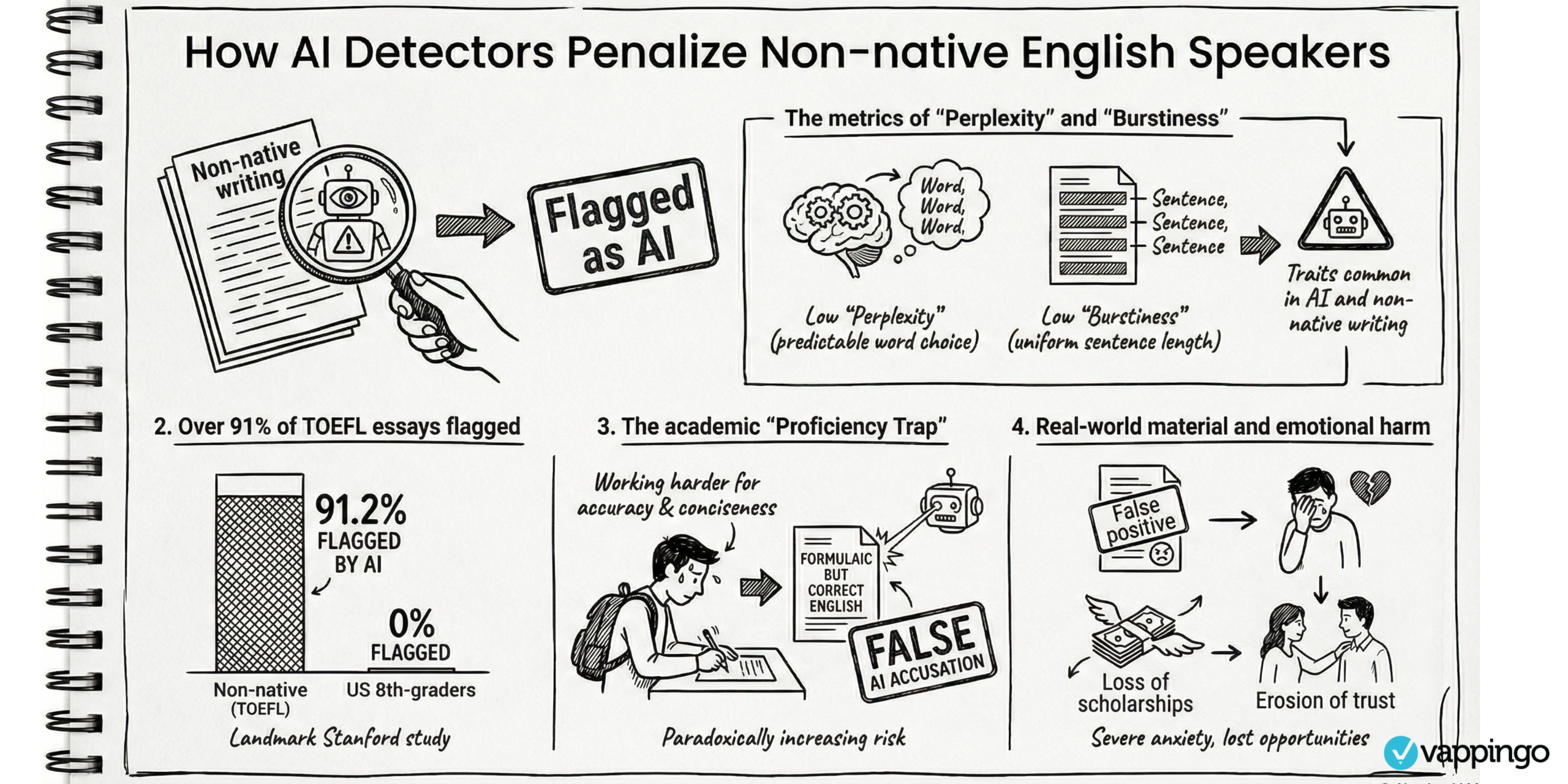

To understand the future of editing, one must understand how algorithmic surveillance evaluates text. AI detectors analyze computational metrics such as perplexity (the unpredictability of word choices) and burstiness (the variation in sentence length and complexity). Because large language models are designed to generate smooth, predictable, and structurally sound text, detection algorithms inherently associate “low perplexity” and “low burstiness” with machine generation.
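To make the two metrics concrete, here is a minimal Python sketch. It is an illustration under loud assumptions, not how any particular detector works: real detectors estimate perplexity with a trained language model, whereas the hypothetical `unigram_perplexity` below only scores a text against its own word frequencies, and `burstiness` is taken here to mean the coefficient of variation of sentence lengths.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def split_sentences(text):
    """Naive splitter: break after ., !, or ? when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def burstiness(text):
    """Coefficient of variation of sentence lengths, measured in words.

    Assumption: this is one simple proxy for burstiness; 0.0 means every
    sentence has the same length.
    """
    lengths = [len(s.split()) for s in split_sentences(text)]
    if len(lengths) < 2 or mean(lengths) == 0:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text):
    """Perplexity of the text under its own unigram word distribution.

    Assumption: a crude stand-in for model-based perplexity. Repetitive
    vocabulary gives a low value; varied vocabulary gives a high one.
    """
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    counts = Counter(words)
    total = len(words)
    log_prob = sum(c * math.log(c / total) for c in counts.values())
    return math.exp(-log_prob / total)


uniform = "The cat sat down. The dog sat down. The bird sat down."
varied = "Stop. The committee deliberated for hours before reaching any decision at all."

# Uniform sentence lengths score lower on burstiness than mixed lengths.
print(burstiness(uniform), burstiness(varied))
print(unigram_perplexity(uniform), unigram_perplexity(varied))
```

Even in this toy form, the evenly built sample scores lower on both measures than the uneven one, which is the pattern detectors read as a machine signature.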

This creates a frustrating paradox for professional editors: the better they are at their jobs, the more likely the manuscript will be flagged as AI-generated. When a human editor refines awkward phrasing, removes syntactic irregularities, and establishes a consistent narrative flow, they inadvertently lower the text’s perplexity. Consequently, highly fluent and proficient academic writing is routinely penalized. This dynamic has introduced a dangerous “perverse incentive” in publishing, where writers and editors feel pressured to artificially “de-polish” their work, intentionally leaving in meandering sentences or minor flaws, simply to prove human authorship to a flawed machine.

Champions of Equity for Non-Native English Speakers

This algorithmic surveillance disproportionately harms marginalized scholars, particularly non-native English speakers (NNES). Studies show that because NNES writers often utilize a more constrained vocabulary and favor uniform, structurally accurate sentences, their writing naturally exhibits the low perplexity that detectors look for. Shockingly, research indicates that up to 97% of NNES texts can be falsely flagged as AI-generated by at least one detector.

Furthermore, when NNES scholars use legitimate assistive tools like Grammarly to meet the rigorous English-language standards of global academia, their writing is smoothed out further, virtually guaranteeing a false positive. In this landscape, human proofreaders position themselves as the “safest choice.” By relying on human experts rather than automated paraphrasers, NNES authors can elevate their manuscripts to publication standards without inflating their AI similarity scores and risking unjust academic penalties.


Guardians of Citation Integrity and the Scholarly Record

Beyond linguistic refinement, the future of the editing profession lies in protecting the epistemological stability of the scholarly record. Generative AI frequently produces “hallucinations,” generating plausible-sounding but entirely fabricated “ghost references” that list real authors and journals. Furthermore, AI models are often unaware of post-publication retractions, meaning they routinely incorporate debunked, retracted, or fraudulent studies into their outputs as if they were credible evidence.

Professional editors act as a critical line of defense against this “citational pollution.” As the ultimate fact-checkers, editors verify that sources actually exist, ensure that arguments are ethically supported, and confirm that cited literature has not been amended or retracted. In an era where AI-generated content can easily pass through initial automated checks, human scrutiny is required to evaluate the true novelty, methodological appropriateness, and evidentiary weight of a manuscript.
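The existence and retraction checks described here can be partly organized as a triage pass before the editor's manual review. The Python sketch below is one hypothetical way to do it. The `Reference` type, the `triage_references` function, and both DOI lists are assumptions made for illustration, and the DOIs use a placeholder prefix. In practice the lists would come from whatever bibliographic and retraction sources the editor trusts, and every "unverified" entry would still need a human check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reference:
    title: str
    doi: str


def normalize_doi(doi):
    """Lowercase a DOI and strip common URL or 'doi:' prefixes."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
    return doi.strip()


def triage_references(references, known_dois, retracted_dois):
    """Sort references into 'ok', 'retracted', and 'unverified' buckets.

    known_dois: DOIs the editor has confirmed exist (hypothetical input).
    retracted_dois: DOIs the editor knows were retracted (hypothetical input).
    Retraction takes priority over existence.
    """
    known = {normalize_doi(d) for d in known_dois}
    retracted = {normalize_doi(d) for d in retracted_dois}
    report = {"ok": [], "retracted": [], "unverified": []}
    for ref in references:
        doi = normalize_doi(ref.doi)
        if doi in retracted:
            report["retracted"].append(ref)
        elif doi in known:
            report["ok"].append(ref)
        else:
            # Possible ghost reference: needs a manual lookup.
            report["unverified"].append(ref)
    return report


# Placeholder entries, not real publications.
manuscript_refs = [
    Reference("Placeholder study A", "https://doi.org/10.0000/EXAMPLE.1"),
    Reference("Placeholder study B", "doi:10.0000/example.2"),
    Reference("Placeholder study C", "10.0000/example.3"),
]
report = triage_references(
    manuscript_refs,
    known_dois={"10.0000/example.1", "10.0000/example.2"},
    retracted_dois={"10.0000/example.2"},
)
for bucket, refs in report.items():
    print(bucket, [r.title for r in refs])
```

A pass like this only narrows the list. Judging whether a source actually supports the claim it is cited for remains the editor's job.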

Navigating Publisher Policies via “Human-in-the-Loop” Integration

The blurring of human effort and digital assistance, often referred to as “cyborg writing,” has forced academic publishers to rapidly update their ethical guidelines. Major publishers like Elsevier, Springer Nature, Wiley, and Taylor & Francis have all declared that AI cannot be listed as an author, as machines cannot assume legal or ethical accountability.

However, these publishers recognize the necessity of editorial polishing. Policies now explicitly carve out exemptions for “AI-assisted copy editing” used to improve readability, style, and grammar, provided these tools are deployed transparently and under strict human oversight. This cements the Human-in-the-Loop (HITL) framework as the gold standard for the future. Editors can leverage advanced AI tools to accelerate their workflow, but they must apply rigorous intellectual judgment to align the findings with ethical standards, mitigate bias, and take full, final responsibility for the published text.

The future of professional editing and proofreading is highly strategic. As algorithms increasingly attempt to police other algorithms, the human editor provides something a machine cannot: verifiable accountability. By serving as a buffer against algorithmic bias, an enforcer of complex ethical standards, and a trusted partner in the Human-in-the-Loop process, professional editors are essential to securing the future of academic evidence and preserving the fundamental trust upon which scholarly inquiry relies.